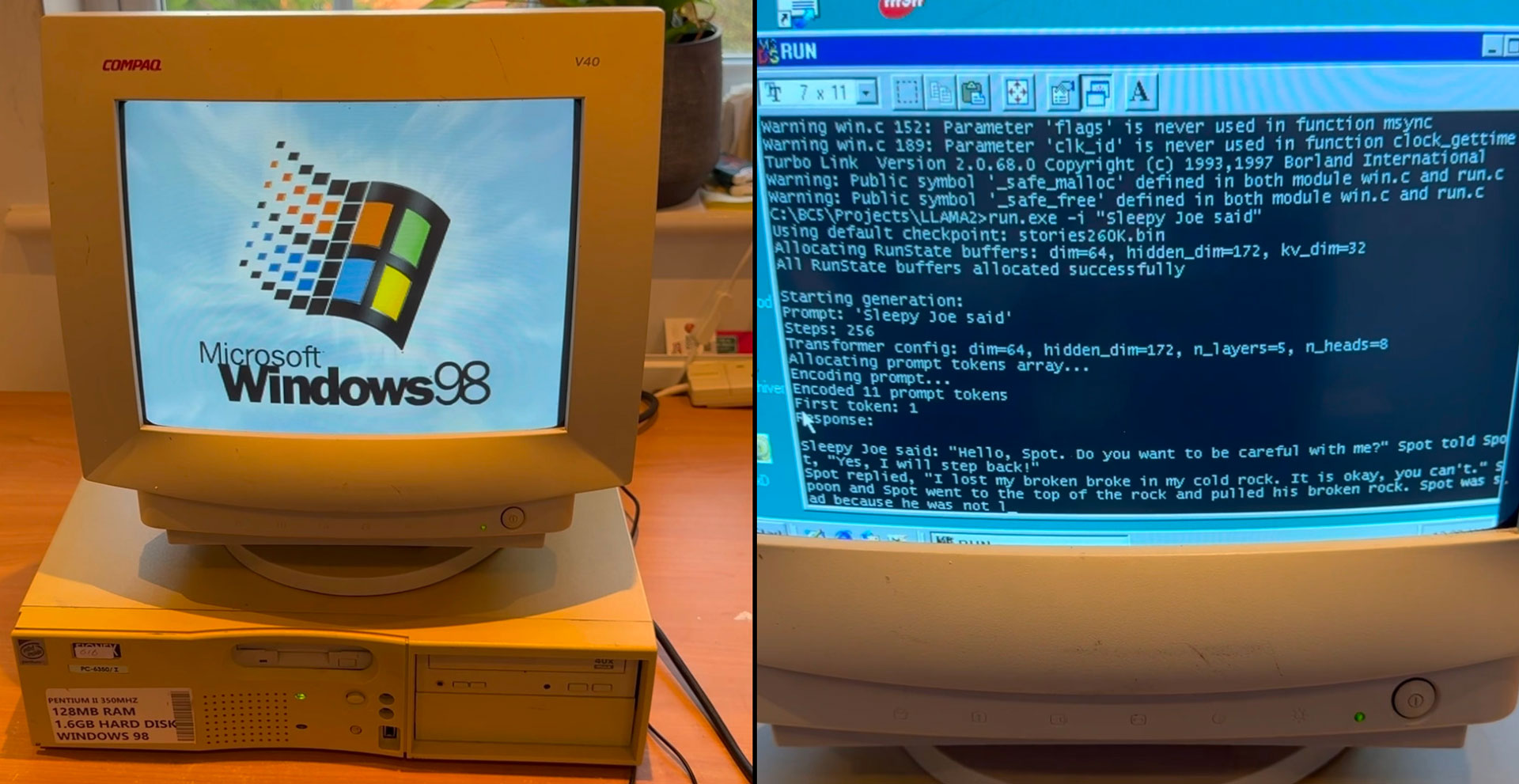

EXO Labs has penned an in depth weblog publish about running Llama on Windows 98 and demonstrated a relatively highly effective AI giant language mannequin (LLM) working on a 26-year-old Home windows 98 Pentium II PC in a short video on social media. The video reveals an historic Elonex Pentium II @ 350 MHz booting into Home windows 98, after which EXO then fires up its customized pure C inference engine based mostly on Andrej Karpathy’s Llama2.c and asks the LLM to generate a narrative about Sleepy Joe. Amazingly, it really works, with the story being generated at a really respectable tempo.

LLM working on Home windows 98 PC26 12 months outdated {hardware} with Intel Pentium II CPU and 128MB RAM.Makes use of llama98.c, our customized pure C inference engine based mostly on @karpathy llama2.cCode and DIY information 👇 pic.twitter.com/pktC8hhvvaDecember 28, 2024

The above eye-opening feat is nowhere close to the top recreation for EXO Labs. This considerably mysterious group got here out of stealth in September with a mission “to democratize entry to AI.” A crew of researchers and engineers from Oxford College fashioned the group. Briefly, EXO sees a handful of megacorps controlling AI as a really unhealthy factor for tradition, fact, and different basic features of our society. Thus EXO hopes to “Construct open infrastructure to coach frontier fashions and allow any human to run them anyplace.” On this approach, abnormal people can hope to coach and run AI fashions on virtually any machine – and this loopy Home windows 98 AI feat is a totemic demo of what will be performed with (severely) restricted sources.

For the reason that Tweet video is relatively transient, we had been grateful to search out EXO’s weblog publish about Running Llama on Windows 98. This publish is revealed as Day 4 of “the 12 days of EXO” collection (so keep tuned).

As readers may anticipate, it was trivial for EXO to select up an outdated Home windows 98 PC from eBay as the muse of this venture, however there have been many hurdles to beat. EXO explains that getting knowledge onto the outdated Elonex branded Pentium II was a problem, making them resort to utilizing “good outdated FTP” for file transfers through the traditional machine’s Ethernet port.

Compiling trendy code for Home windows 98 was in all probability a better problem. EXO was glad to search out Andrej Karpathy’s llama2.c, which will be summarized as “700 traces of pure C that may run inference on fashions with Llama 2 structure.” With this useful resource and the outdated Borland C++ 5.02 IDE and compiler (plus a couple of minor tweaks), the code could possibly be made right into a Home windows 98-compatible executable and run. This is a GitHub link to the completed code.

35.9 tok/sec on Home windows 98 🤯It is a 260K LLM with Llama-architecture.We additionally tried out bigger fashions. Ends in the weblog publish. https://t.co/QsViEQLqS9 pic.twitter.com/lRpIjERtSrDecember 28, 2024

One of many high quality of us behind EXO, Alex Cheema, made a degree of thanking Andrej Karpathy for his code, marveling at its efficiency, delivering “35.9 tok/sec on Home windows 98” utilizing a 260K LLM with Llama structure. It’s in all probability price highlighting that Karpathy was beforehand a director of AI at Tesla and was on the founding crew at OpenAI.

In fact, a 260K LLM is on the small aspect, however this ran at an honest tempo on an historic 350 MHz single-core PC. Based on the EXO weblog, Transferring as much as a 15M LLM resulted in a era pace of slightly over 1 tok/sec. Llama 3.2 1B was glacially sluggish at 0.0093 tok/sec, nevertheless.

BitNet is the larger plan

By now, you can be properly conscious that this story is not nearly getting an LLM to run on a Home windows 98 machine. EXO rounds out its weblog publish by speaking concerning the future, which it hopes shall be democratized due to BitNet.

“BitNet is a transformer structure that makes use of ternary weights,” it explains. Importantly, utilizing this structure, a 7B parameter mannequin solely wants 1.38GB of storage. That will nonetheless make a 26-year-old Pentium II creak, however that is feather-light to trendy {hardware} and even for decade-old gadgets.

EXO additionally highlights that BitNet is CPU-first – swerving costly GPU necessities. Furthermore, the sort of mannequin is claimed to be 50% extra environment friendly than full-precision fashions and might leverage a 100B parameter mannequin on a single CPU at human studying speeds (about 5 to 7 tok/sec).

Earlier than we go, please word that EXO continues to be in search of assist. In the event you additionally wish to keep away from the way forward for AI being locked into huge knowledge facilities owned by billionaires and megacorps and assume you may contribute not directly, you possibly can attain out.

For a extra informal liaison with EXO Labs, they host a Discord Retro channel to debate working LLMs on outdated {hardware} like outdated Macs, Gameboys, Raspberry Pis, and extra.