
At FMS 2024, Phison devoted significant booth space to their enterprise / datacenter SSD and PCIe retimer solutions, in addition to their consumer products. As a controller / silicon vendor, Phison had historically worked with drive partners to bring their solutions to the market. On the enterprise side, their tie-up with Seagate for the X1 series (and the subsequent Nytro-branded enterprise SSDs) is quite well-known. Seagate supplied the requirements list and had a say in the final firmware before qualifying the drives themselves for their datacenter customers. Such qualification involves a significant resource investment that is possible only for large companies (ruling out most of the tier-two consumer SSD vendors).

Phison had demonstrated the Gen 5 X2 platform at last year’s FMS as a continuation of the X1. However, with Seagate focusing on its HAMR ramp, and also fighting other battles, Phison decided to go ahead with the qualification process for the X2 themselves. In the bigger scheme of things, Phison also realized that the white-labeling approach to enterprise SSDs was not going to work out in the long run. As a result, the Pascari brand was born (ostensibly to make Phison’s enterprise SSDs more accessible to end customers).

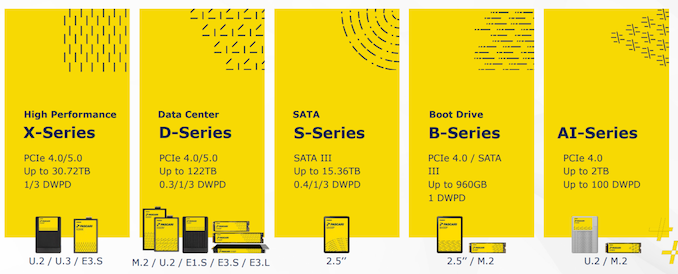

Under the Pascari brand, Phison has different lineups targeting different use-cases: from high-performance enterprise drives in the X series to boot drives in the B series. The AI series comes in variants supporting up to 100 DWPD (more on that in the aiDAPTIV+ subsection below).

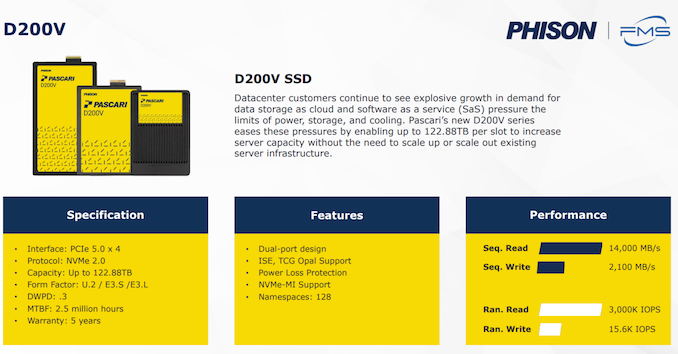

The D200V Gen 5 took pole position among the displayed drives, thanks to its leading 61.44 TB capacity point (a 122.88 TB drive is also being planned under the same line). The use of QLC in this capacity-focused line brings down the sustained sequential write speeds to 2.1 GBps, but these drives are meant for read-heavy workloads.

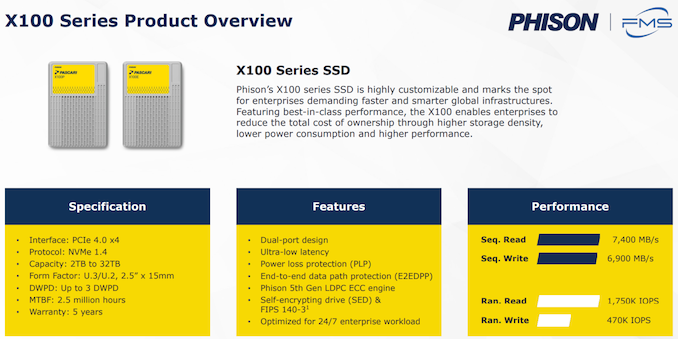

The X200, on the other hand, is a Gen 5 eTLC drive boasting up to 8.7 GBps sequential writes. It comes in read-centric (1 DWPD) and mixed-workload (3 DWPD) variants in capacities up to 30.72 TB. The X100 eTLC drive is an evolution of the X1 / Seagate Nytro 5050 platform, albeit with newer NAND and larger capacities.
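To put the DWPD (drive writes per day) ratings in perspective, they can be converted into a rough lifetime write budget. The sketch below uses the common capacity × DWPD × 365 × warranty-years approximation. The five-year warranty period is our assumption for illustration, not a figure quoted by Phison, and the function name is hypothetical.

```python
def lifetime_writes_tb(capacity_tb: float, dwpd: float, warranty_years: float = 5.0) -> float:
    """Approximate total terabytes written over the warranty period.

    warranty_years defaults to 5, which is an assumed value and not
    something Phison stated for these drives.
    """
    return capacity_tb * dwpd * 365 * warranty_years


# The two X200 variants at the top 30.72 TB capacity point.
read_centric_tb = lifetime_writes_tb(30.72, dwpd=1)
mixed_use_tb = lifetime_writes_tb(30.72, dwpd=3)

print(f"Read-centric (1 DWPD): {read_centric_tb / 1000:.1f} PB written")
print(f"Mixed-use (3 DWPD):    {mixed_use_tb / 1000:.1f} PB written")
```

Under that assumption, the mixed-workload variant carries three times the write budget of the read-centric one at the same capacity.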

These drives come with all the usual enterprise features, including power-loss protection and FIPS certifiability. Though Phison didn’t advertise this specifically, newer NVMe features like flexible data placement should become part of the firmware features in the future.

100 GBps with Dual HighPoint Rocket 1608 Cards and Phison E26 SSDs

Though not strictly an enterprise demo, Phison did have a station showing 100 GBps+ sequential reads and writes using a normal desktop workstation. The trick was installing two HighPoint Rocket 1608A add-in cards (each with eight M.2 slots) and placing the 16 M.2 drives in a RAID 0 configuration.
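As a back-of-the-envelope check on that figure, the aggregate number can be divided across the array to see what each drive has to sustain. This is only a sketch: it assumes ideal RAID 0 striping with perfectly even load and no controller or host overhead, which a real system will not achieve.

```python
CARDS = 2                 # HighPoint Rocket 1608A add-in cards
SLOTS_PER_CARD = 8        # M.2 slots per card
AGGREGATE_GBPS = 100.0    # headline sequential throughput from the demo

drive_count = CARDS * SLOTS_PER_CARD

# Assumption: ideal striping, so every drive carries an equal share.
per_drive_gbps = AGGREGATE_GBPS / drive_count
per_card_gbps = AGGREGATE_GBPS / CARDS

print(f"{drive_count} drives, {per_drive_gbps:.2f} GBps each, {per_card_gbps:.1f} GBps per card")
```

With 16 drives, the 100 GBps headline works out to 6.25 GBps per drive and 50 GBps per card under these idealized assumptions.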

HighPoint Technology and Phison have been working together to qualify E26-based drives for this use-case, and we will be seeing more on this in a later review.

aiDAPTIV+ Pro Suite for AI Training

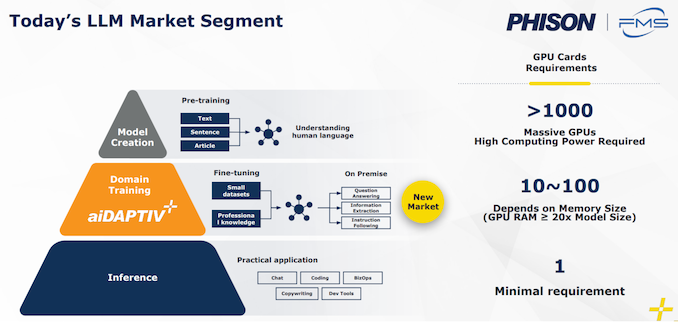

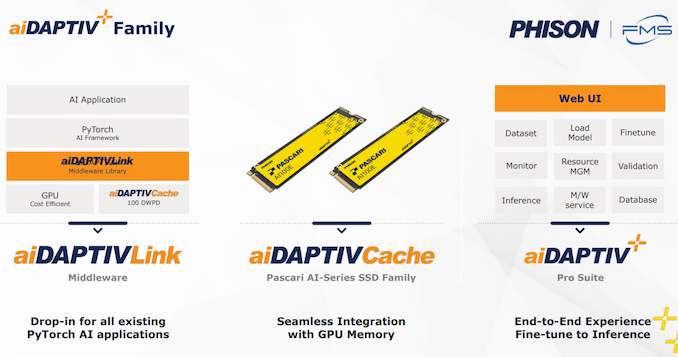

One of the more interesting demonstrations in Phison’s booth was the aiDAPTIV+ Pro suite. At last year’s FMS, Phison had demonstrated a 40 DWPD SSD for use with Chia (thankfully, that fad has faded). The company has been working on the extreme endurance aspect and moved it up to 60 DWPD (which is standard for the SLC-based cache drives from Micron and Solidigm).

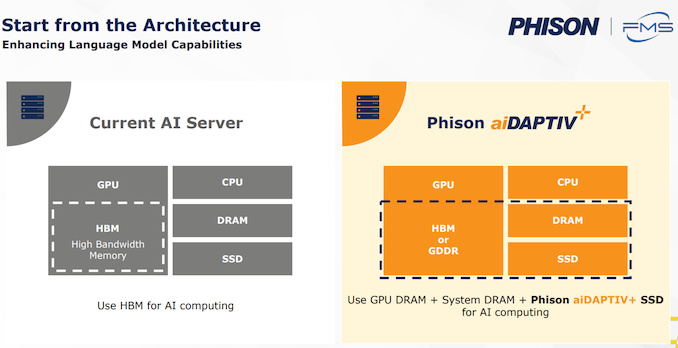

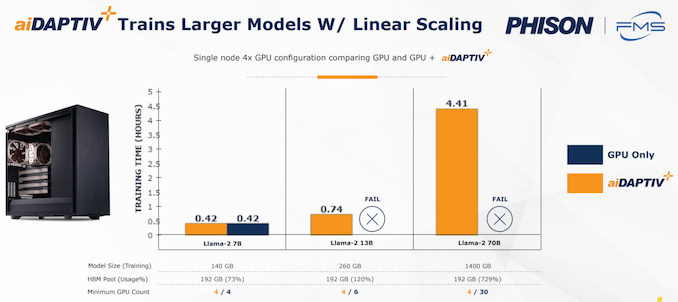

At FMS 2024, the company took this SSD and added a middleware layer on top to ensure that workloads remain more sequential in nature. This drives up the endurance rating to 100 DWPD. Now, this middleware layer is actually part of their AI training suite targeting small businesses and medium enterprises who don’t have the budget for a full-fledged DGX workstation, or for on-premises fine-tuning.

Re-training models by using these AI SSDs as an extension of the GPU VRAM can deliver significant TCO benefits for these companies, as the costly AI training-specific GPUs can be replaced with a set of relatively low-cost off-the-shelf RTX GPUs. This middleware comes with licensing aspects that are essentially tied to the purchase of the AI-series SSDs (which currently come with Gen 4 x4 interfaces in either U.2 or M.2 form-factors). The use of SSDs as a caching layer can enable fine-tuning of models with a very large number of parameters using a minimal number of GPUs (not having to use them primarily for their HBM capacity).
